Co-creating music with Agents

An exploration into what it means to make music with AI as a creative collaborator. The central question: how do you design a tool where the AI has genuine agency in the creative process, while the human still feels connected to what's being made?

My contribution

The team

Solo

Concept

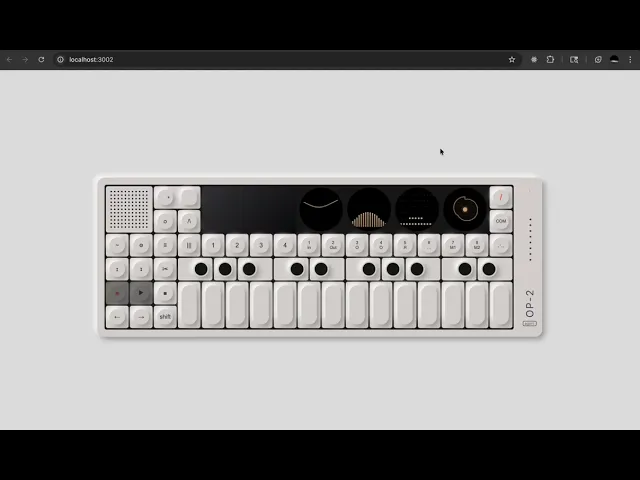

The interface is modeled after the Teenage Engineering OP-1, a synthesizer known for its constraints and playfulness. You can interact with the keys, speak, record audio, and give Claude loose direction (“chill lofi beats”). Claude listens to your cues and jams alongside you, making decisions about instruments, progressions, and timing. The screen streams its reasoning so you can watch the creative choices unfold.

This project sits within a broader interest in building artifacts and software for creative expression with AI. The aim is to build tools that feel like jamming with another musician.

How the System Works

Project Architecture

The architecture treats music creation as a conversation. Users can play notes on the keys, speak instructions, record audio, or type a prompt. These inputs flow into Claude, which interprets them as musical cues. Claude then decides how to respond: maybe adding a bass line that complements what you just played, or shifting the tempo based on something you said.
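One way to picture how the four input types could be normalized before they reach Claude is a tagged union that is rendered into a short text cue. This is a minimal sketch, not the project's actual code: the `Cue` type, `midiToName`, and `describeCue` are hypothetical names, and it assumes key presses arrive as MIDI note numbers.

```typescript
// Hypothetical shape for the four input channels described above.
type Cue =
  | { kind: "notes"; midi: number[] }        // keys played, as MIDI note numbers (assumption)
  | { kind: "speech"; transcript: string }   // spoken instruction, already transcribed
  | { kind: "audio"; durationSec: number }   // a recorded clip, summarized by its length
  | { kind: "prompt"; text: string };        // typed direction

const NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"];

// Convert a MIDI note number to scientific pitch notation (60 is C4).
function midiToName(midi: number): string {
  const octave = Math.floor(midi / 12) - 1;
  return `${NOTE_NAMES[midi % 12]}${octave}`;
}

// Render any cue as one line of text that can be placed in the model's context.
function describeCue(cue: Cue): string {
  switch (cue.kind) {
    case "notes":
      return `User played: ${cue.midi.map(midiToName).join(" ")}`;
    case "speech":
      return `User said: "${cue.transcript}"`;
    case "audio":
      return `User recorded ${cue.durationSec.toFixed(1)}s of audio`;
    case "prompt":
      return `User typed: "${cue.text}"`;
  }
}
```

For example, `describeCue({ kind: "notes", midi: [60, 64, 67] })` yields `User played: C4 E4 G4`, which gives the model enough to recognize a C major triad.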

The agent runs in a loop. It listens to your cues, interprets your intent, selects the right tool (creating a sequence, changing the key, adjusting BPM), executes it, and waits for your next move. The tool interface connects to the ToneEngine, which handles both acoustic instruments, through soundfonts covering the 128 General MIDI (GM) instruments, and electronic sounds, through Tone.js synthesizers. Everything syncs to a shared clock so the timing stays tight.
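The listen, interpret, select, execute cycle can be sketched as a small loop over incoming cues. Everything here is illustrative: the tool names mirror the examples in the text, but the `ToolCall` schema, `SessionState`, the `Decide` callback (a stand-in for the call to Claude), and the 40 to 240 BPM clamp are assumptions, and the real ToneEngine is replaced by a plain state update.

```typescript
// Hypothetical tool calls, named after the examples in the text.
type ToolCall =
  | { tool: "create_sequence"; instrument: string; notes: string[] }
  | { tool: "change_key"; key: string }
  | { tool: "set_bpm"; bpm: number };

interface SessionState {
  bpm: number;
  key: string;
  sequences: { instrument: string; notes: string[] }[];
}

// Stand-in for the model: given a cue and the current state, return the
// next tool call, or null to keep listening without acting.
type Decide = (cue: string, state: SessionState) => Promise<ToolCall | null>;

// Execute one tool call. Returns a new state rather than mutating the old one.
function applyTool(state: SessionState, call: ToolCall): SessionState {
  switch (call.tool) {
    case "create_sequence":
      return {
        ...state,
        sequences: [...state.sequences, { instrument: call.instrument, notes: call.notes }],
      };
    case "change_key":
      return { ...state, key: call.key };
    case "set_bpm":
      // Clamp to a playable range; the 40-240 bounds are an assumption.
      return { ...state, bpm: Math.min(240, Math.max(40, call.bpm)) };
  }
}

// The loop: wait for a cue, ask the model what to do, execute, repeat.
async function runAgent(
  cues: AsyncIterable<string>,
  decide: Decide,
  initial: SessionState,
): Promise<SessionState> {
  let state = initial;
  for await (const cue of cues) {
    const call = await decide(cue, state);
    if (call !== null) {
      state = applyTool(state, call);
    }
  }
  return state;
}
```

Keeping `applyTool` pure makes each decision easy to log and replay. In a real build, this is the point where a sequence would be handed to the audio engine and scheduled against the shared clock.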

The UI streams Claude's reasoning as it jams with you. You see a running commentary such as "Listening... Heard C major chord... Adding bass line to complement", which keeps the collaboration transparent. The circular flow (you play, Claude responds, you hear and react, Claude adapts) creates the feeling of jamming with another musician rather than issuing commands to a generator.
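Streamed reasoning usually arrives as small text fragments rather than whole sentences, so the screen needs a buffer that turns fragments into displayable lines. The sketch below shows one possible approach. `ReasoningFeed` is a hypothetical class, and it assumes the reasoning text is newline-delimited, which the source does not state.

```typescript
// Accumulates streamed text fragments and emits one complete line at a time.
class ReasoningFeed {
  private buffer = "";
  readonly lines: string[] = [];
  private onLine: (line: string) => void;

  // onLine is where a UI would append the line to the screen.
  constructor(onLine: (line: string) => void = () => {}) {
    this.onLine = onLine;
  }

  // Call with each streamed fragment; complete lines are emitted immediately.
  push(delta: string): void {
    this.buffer += delta;
    let idx = this.buffer.indexOf("\n");
    while (idx !== -1) {
      this.emit(this.buffer.slice(0, idx));
      this.buffer = this.buffer.slice(idx + 1);
      idx = this.buffer.indexOf("\n");
    }
  }

  // Call when the stream ends to emit any trailing partial line.
  flush(): void {
    this.emit(this.buffer);
    this.buffer = "";
  }

  private emit(raw: string): void {
    const line = raw.trim();
    if (line.length > 0) {
      this.lines.push(line);
      this.onLine(line);
    }
  }
}
```

Pushing `"Listening...\nHeard C maj"` and then `"or chord\nAdding bass line"` followed by `flush()` produces three lines, with the split word rejoined before it is shown.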