Eternal Sunshine of a Slopless Mind

Mar 9, 2026

I, along with many others, have started to build software with AI this year. You've probably seen the demos. They all blur together after a while. Someone is talking to an agent. Things appear on screen. Forty minutes later, there's a "working" app. It's hard to tell what was decided, what happened, and who any of it was for.

I've watched dozens of these demos this year. To be completely honest, I've made some myself. In one demo I watched recently, someone built a tool that scraped Reddit, processed the data, and displayed it in a dashboard to aid some sort of performance marketing for their “turboslop” app.

The whole thing from start to finish took about forty minutes. The app worked. While demoing, the creator scrolled through and clicked a few tabs. They paused, unsure of what to do next: “...and that’s it.” The comments on the post were rapturous, with chants of “This changes everything”, “Software engineering is dead”, “Everyone just became a 100X engineer”.

A few weeks later, I went looking for it. The repo hadn't been touched since. The dashboard wasn't running. I don't think anyone ever used it, including the person who made it. The forty-minute app. The weekend project. The breathless post that exclaims "I can't believe AI one-shotted this." ...I can, because no one's using it. For most of these abandoned projects, the demo was the product.

Speed as its own justification. The performance of producing artefacts of pure velocity.

The fog

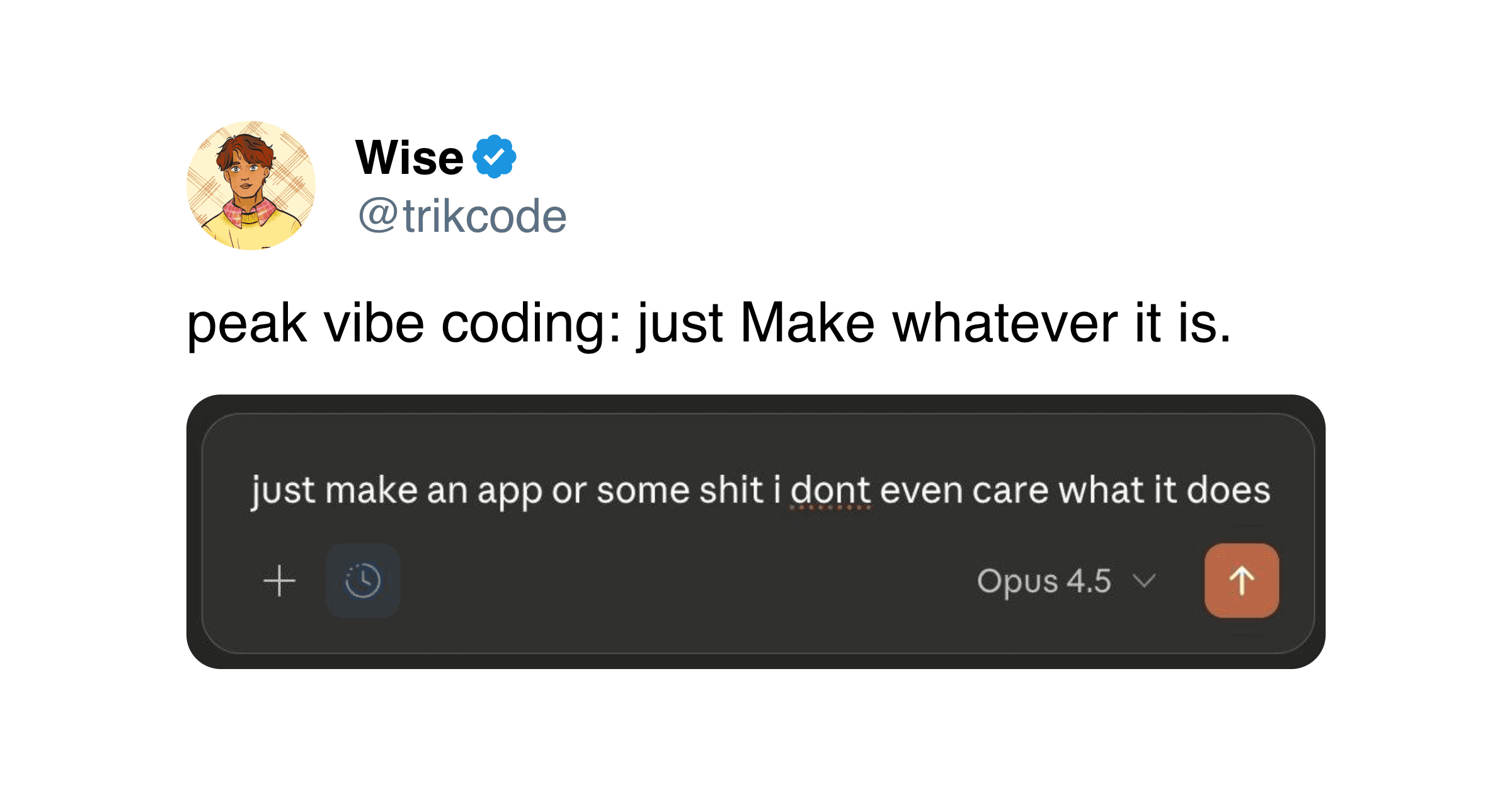

When you can build anything in forty minutes, you tend to skip the part where you figure out what's worth building. The speed carries you past the question of purpose. You're three features deep before you realise you never decided what it was for.

This is not a failure of the tools. They did exactly what you asked for. The issue is the lack of pushback in the process. No friction or contact with the people you’re making it for. Just you and the machine in a loop where every idea gets a resounding “You’re absolutely right!”.

In slower ways of working, reality has a way of intruding whether you want it to or not. You wrote a brief; someone asked who the audience was. You showed a wireframe; a teammate said they didn't understand what it did. You put something in front of a user and watched them ignore the feature you thought was the whole point. These moments were annoying, but they anchored your work in reality.

Inhabiting a sealed room

We become inhabitants of sealed rooms when nothing in our process represents anyone other than ourselves. No collaborator closer to the user than you are. No user who behaves differently than you expected. No moment where the work makes contact with the world outside and has to survive it.

The creator in a sealed room isn't lazy. They're often working hard, prompting, iterating and adjusting. However, the iteration happens in a closed loop. The only feedback comes from the machine, and the machine will build whatever you ask without questioning. A yes-man with infinite patience and zero judgment. You push. Nothing pushes back. So you keep pushing. Faster. Further. Hurling yourself in whatever direction you happened to be facing when you started.

Maybe part of the problem is that your computer is a single-player instrument. Trackpad, keyboard, mouse; all steered by one pair of hands. Collaboration in software has always been a layer on top of these instruments. AI as it's currently built doubles down on this. The promise of tools like OpenClaw is that you don't need anyone else. You can go from an impulse to an artefact without grounding your creation.

There's a quality to work made this way. You can tell. I've started recognising it in other people's projects and also in my own. Everything runs well enough. The design is competent. It looks and feels like a real product. But there's a strange emptiness to it, like a model home that's furnished, clean and uninhabited. A product that was never disagreed with.

You can build a lot in a sealed room. But you can't build anything meaningful in service of others.

Grounding

Last week, I built a website for an exhibition @sairamved was a part of. We sat down together to work through it, OpenClaw writing code in the background while we talked. It took all afternoon.

We disagreed about the landing page. Whether to open with the artist's statement or drop visitors straight into the installation details. I assumed the statement came first. It felt right. Sai thought that was wrong. He said anyone visiting the site already knew what the show was about. They just wanted practical information.

We went back and forth for a while, but he was right. I was building for how I understood the project. Sai was a participant, one of the people the page was for.

Either of us could have made something alone in an hour. But working with AI in a sealed room, I would have missed this. The AI didn't know who the audience was, and neither did I. It would have built whatever I asked for. The landing page would have been beautiful and polished, but also wrong. Sai introduced a perspective I was blind to, the perspective of the person the project was in service of. The disagreement was the moment the work made contact with reality and grounded itself.

The final site opens on installation details. Dates, location, what to expect when you walk in. The artist's statement is there if you want it but it's not the front door. Everyone visiting already knew what the show was about. They just needed to know where and when. If I'd built it alone, the statement would have been the first thing you saw.

The afternoon was slow, sometimes frustrating. But each decision in that website survived the scrutiny of the person it was actually for. The work was better for every extra minute it took.

Flashlights vs street lamps

If you want to light a dimly lit street, you don't hand every person a flashlight. You install street lamps.

A flashlight helps one person move. A street lamp lets everyone see where they are. Right now, the entire AI tooling ecosystem is flashlights. Individual productivity. Your personal copilot. Your agent. The assumption is that if each person moves fast enough, good work follows. It doesn't. You just get a lot of people running in the dark.

The speed doesn't have to point inward. When Sai and I sat down, every time we disagreed, we could see the result in minutes, check it against what we actually meant, and keep going.

We should use this newfound velocity in service of grounding. You stay in the room with the person the work is for. The AI makes the artefact fast enough that it lives inside the conversation.

The street stays lit. Everyone can see where they're going.

Against artefacts of pure velocity

The entire conversation about building with AI has collapsed into a single metric: speed. Prompts per hour. Features shipped. Time to first deploy. The tools now allow us to move faster. But nobody seems to be asking: is the work being produced any good? Will the work last? Does anyone even need it at all?

I've sat in a sealed room, building fast, producing things that worked but meant nothing. I've sat in a park, building slowly, arguing about a landing page. The work that came out of the park was better for every minute it took. Grounding my creation with the people it's in service of adds friction. It is slower, but the result is more meaningful.

Where are all the things people built in forty-minute demos? What happened to them? When I look around, all I see is a lot of quickly built, empty model homes. Doors in all the wrong places and nobody in the room to say so.